For the first time since camera resolution rose from 8MP to 12MP with the iPhone 6s, Apple has increased the pixel count: the iPhone 14 Pro and Pro Max have a new 48MP main sensor.

That’s a big leap. The new sensor is approximately 60 percent larger than the previous 12MP sensor, which lets it capture more light overall. A sensor consists of millions of light-sensitive elements, each corresponding to a pixel. Apple’s 48MP sensor has four times as many elements as the 12MP sensor: 6048×8064 pixels versus 3024×4032 pixels. Because four times as many elements share only about 60 percent more area, each element is smaller and captures less light than an element on the previous sensor. This combination promises more detail, but would typically also increase image noise in low light.
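To make the arithmetic concrete, here is a small Python sketch using the pixel dimensions quoted above. The 1.6 area ratio is an assumption read off the "approximately 60 percent" figure, and the calculation ignores gaps between elements and other layout details, so treat the per-element result as a rough estimate.

```python
# Pixel dimensions quoted in the article (width x height).
sensor_48mp = (6048, 8064)
sensor_12mp = (3024, 4032)

def pixel_count(dims):
    """Total number of sensor elements for a (width, height) pair."""
    width, height = dims
    return width * height

count_48 = pixel_count(sensor_48mp)   # 48,771,072 elements
count_12 = pixel_count(sensor_12mp)   # 12,192,768 elements
element_ratio = count_48 / count_12   # 4.0: both dimensions double

# Assumption: the new sensor's area is about 1.6x the old one ("approximately 60 percent larger").
area_ratio = 1.6
# Area available to each element, relative to one element on the 12MP sensor.
area_per_element = area_ratio / element_ratio

print(f"{count_48:,} vs {count_12:,} elements, ratio {element_ratio:.1f}")
print(f"each element has about {area_per_element:.0%} of the old element's area")
```

Under these assumptions each new element gets roughly 40 percent of the area of an old one, which is why noise becomes a concern.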


Apple has set out to avoid this in everyday shooting. By default, the 48MP sensor produces a 12MP image, both in Apple’s own Camera app and in third-party photo apps. As with all photos taken with an iPhone or iPad, multiple exposures are combined invisibly and processed; on the iPhone 14 models this processing is called the Photonic Engine, which builds on Apple’s earlier Deep Fusion processing. The Photonic Engine intervenes earlier in the processing pipeline than the previous algorithm, and Apple claims this lets its machine-learning-based processing do a better job with low-light images.
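Why does combining shots help? Here is a minimal sketch of the general principle under simplifying assumptions (a static scene, perfectly aligned frames, and purely random Gaussian noise): averaging N frames reduces random noise by roughly the square root of N. This is not Apple’s Photonic Engine, whose internals Apple has not published; it only illustrates why multi-frame processing can offset the noise from smaller sensor elements.

```python
import random
import statistics

def noisy_frame(true_value, noise_sd, n_pixels, rng):
    """Simulate one exposure of a flat gray patch with Gaussian sensor noise."""
    return [true_value + rng.gauss(0, noise_sd) for _ in range(n_pixels)]

def average_frames(frames):
    """Combine aligned frames by averaging the values at each pixel position."""
    return [sum(values) / len(values) for values in zip(*frames)]

rng = random.Random(42)
true_value, noise_sd, n_pixels = 100.0, 10.0, 10_000

single = noisy_frame(true_value, noise_sd, n_pixels, rng)
stack = [noisy_frame(true_value, noise_sd, n_pixels, rng) for _ in range(8)]
merged = average_frames(stack)

print(f"noise in one frame:   {statistics.pstdev(single):.2f}")
print(f"noise after 8 frames: {statistics.pstdev(merged):.2f}")  # roughly 10 / sqrt(8), about 3.5
```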

Shoot at 48MP

Those 12MP images can be great, and in our tests they were. But we have a 48MP sensor in our hands, and we want to make full use of it. You can turn on Raw mode in the Camera app to capture a very high-resolution, less-processed image that goes far beyond what the iPhone could capture before. Because 48MP captures don’t benefit as much from Apple’s computational photography, they have drawbacks beyond the extra storage and processing power needed to capture and edit them.

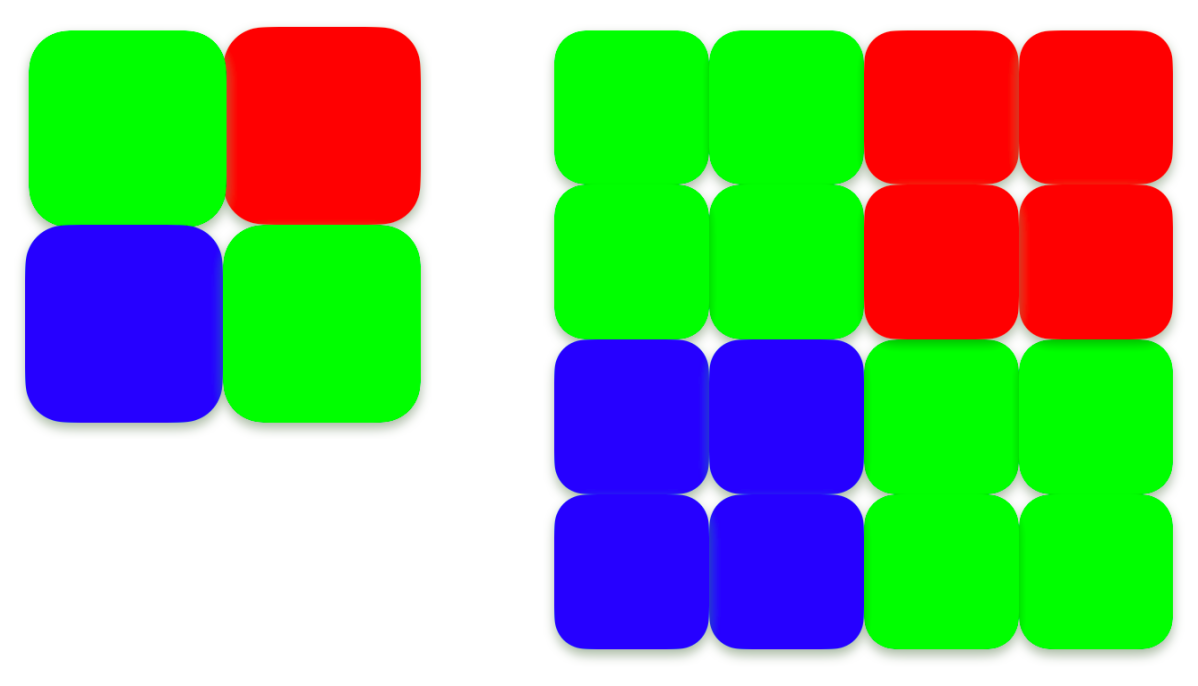

One of the reasons for this is that Apple has packed the sensor elements more densely. Each element sits behind a red, green, or blue filter, so it measures the intensity of only that one light component. In almost every digital camera, including the iPhone’s, full color is therefore not captured directly at each pixel but interpolated from neighboring elements. A sensor typically has two green elements for every red and every blue element, because green-filtered light carries much more of the luminance (the tonal gradations our eyes perceive) than blue or red light.
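The sketch below illustrates the idea with a conventional RGGB filter layout and the simplest possible interpolation, averaging the four green neighbors of a red site. Both choices are assumptions for illustration: real camera pipelines, including Apple’s, use far more sophisticated demosaicing, and the raw readings are made-up numbers.

```python
from collections import Counter

# One 2x2 tile of a conventional Bayer color filter array (RGGB layout, assumed).
BAYER_TILE = [["R", "G"],
              ["G", "B"]]

def filter_color(row, col):
    """Color filter over the element at (row, col), repeating the 2x2 tile."""
    return BAYER_TILE[row % 2][col % 2]

def count_filters(height, width):
    """Tally how many elements of each color a height x width sensor has."""
    return Counter(filter_color(r, c) for r in range(height) for c in range(width))

def green_at(raw, row, col):
    """Bilinear estimate of green at a red or blue site: average the 4 green neighbors."""
    neighbors = [raw[row - 1][col], raw[row + 1][col], raw[row][col - 1], raw[row][col + 1]]
    return sum(neighbors) / 4

print(sorted(count_filters(4, 4).items()))  # [('B', 4), ('G', 8), ('R', 4)]

# Hypothetical raw readings; (2, 2) is a red site, its four neighbors are green.
raw = [
    [10, 20, 10, 20],
    [20, 30, 22, 30],
    [10, 24, 50, 26],
    [20, 30, 28, 30],
]
print(green_at(raw, 2, 2))  # 25.0
```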

Apple’s 48MP sensor is a quad-pixel design: its small elements are grouped into two-by-two blocks that all sit behind the same color filter, so each block acts like one oversized element. As a result, a 48MP raw image captures more detail overall, but each 4×4 pixel area contains effectively no more color differentiation than a 2×2 pixel area on a 12MP sensor. This could result in softer color detail than on a sensor that keeps the finer, element-by-element color pattern.
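A quad-pixel layout can be sketched by stretching each filter of the RGGB tile over a two-by-two block of elements. Averaging each same-color block ("binning") is one common way such sensors produce a quarter-resolution image, which is how a 48MP sensor can yield 12MP. The RGGB ordering, the plain averaging, and the sample values are assumptions, not Apple’s documented pipeline.

```python
# Assumed RGGB ordering; on a quad-pixel sensor each filter covers a 2x2 block.
BAYER_TILE = [["R", "G"],
              ["G", "B"]]

def quad_filter_color(row, col):
    """Color filter at (row, col) on a quad-pixel sensor."""
    return BAYER_TILE[(row // 2) % 2][(col // 2) % 2]

def bin_2x2(raw):
    """Average each 2x2 same-color block, halving width and height (4x fewer pixels)."""
    height, width = len(raw), len(raw[0])
    return [
        [
            (raw[r][c] + raw[r][c + 1] + raw[r + 1][c] + raw[r + 1][c + 1]) / 4
            for c in range(0, width, 2)
        ]
        for r in range(0, height, 2)
    ]

# One 4x4 block holds only four distinct color samples: R, G, G, B.
for r in range(4):
    print(" ".join(quad_filter_color(r, c) for c in range(4)))
# R R G G
# R R G G
# G G B B
# G G B B

raw = [[r * 4 + c for c in range(4)] for r in range(4)]  # hypothetical readings 0..15
print(bin_2x2(raw))  # [[2.5, 4.5], [10.5, 12.5]]
```

The binned 2×2 result is itself an ordinary R, G, G, B tile, which is why a 4×4 area of the quad sensor carries about as much color information as a 2×2 area of a conventional 12MP sensor.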

To shoot in Raw mode, go to “Settings > Camera > Formats”, enable Apple ProRAW, and make sure 48MP is selected as the ProRAW resolution. In the Camera app, tap the “RAW” button in the top-right corner. The slash through the label disappears, and you can now capture raw images. By default this choice is temporary; to make it permanent, enable “Apple ProRAW” under “Settings > Camera > Preserve Settings”, and the Camera app will remember your raw selection every time you open it.

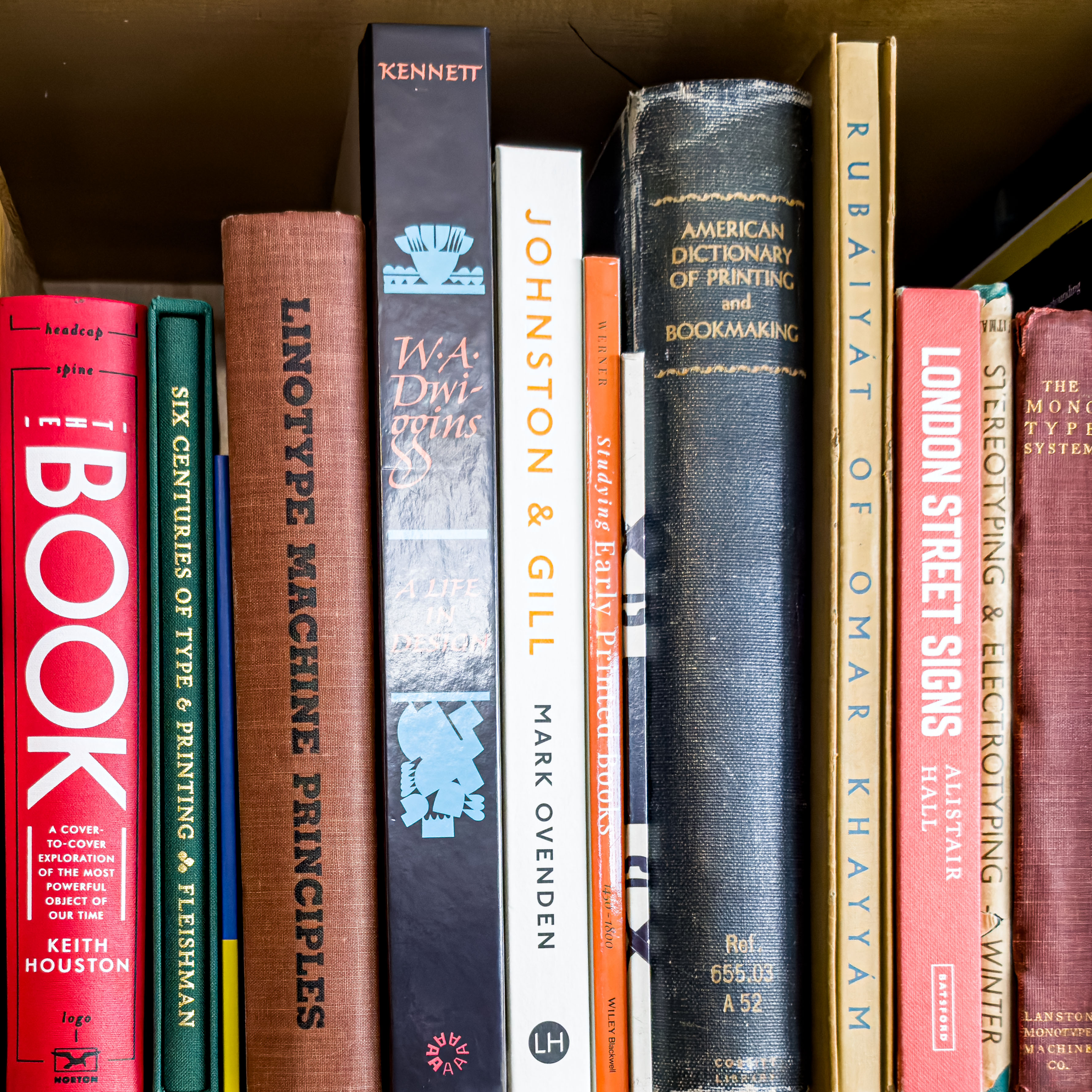

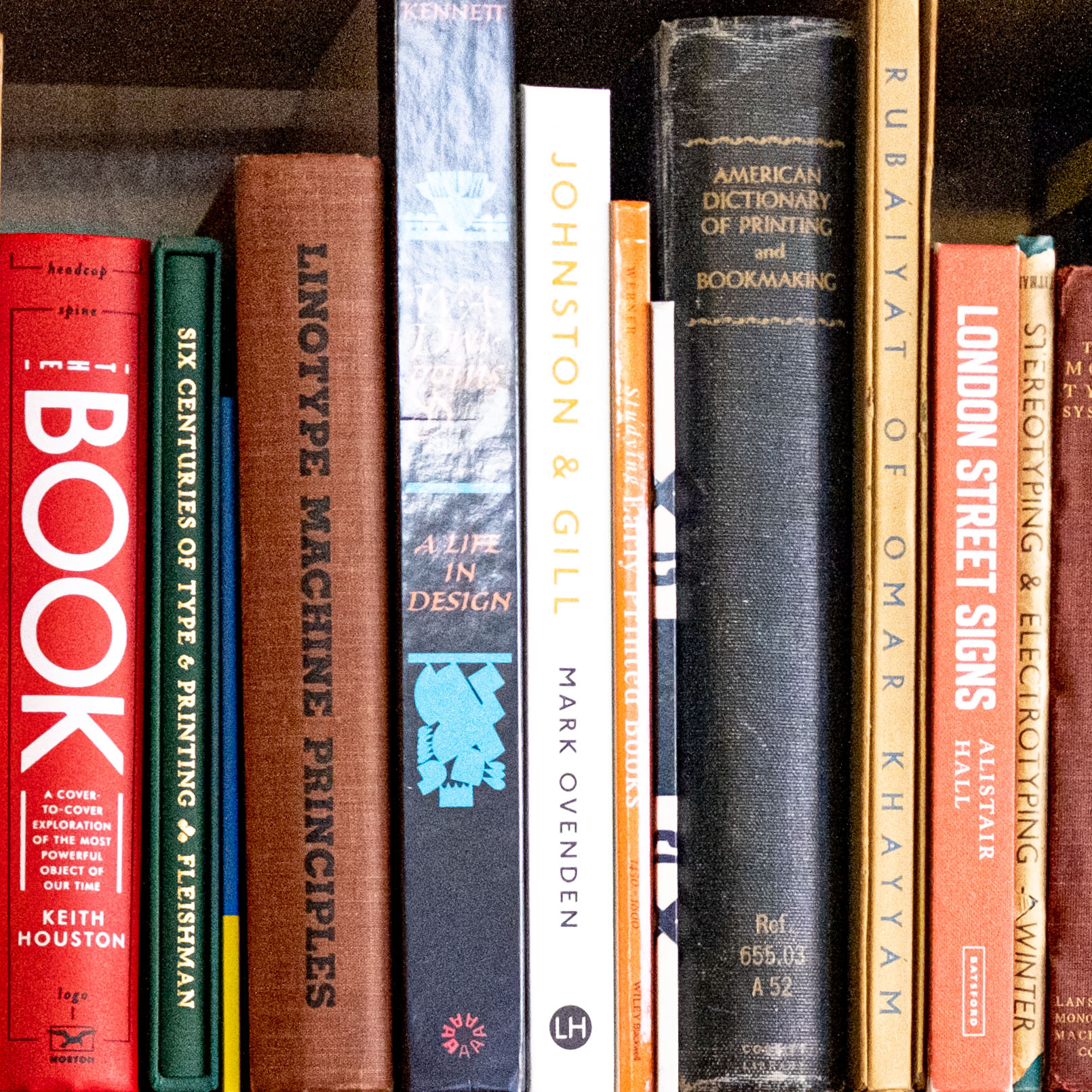

iPhone 14 Pro vs. Fujifilm X-E4 system camera

To test Apple’s 48MP raw captures, we took a series of photos at different settings with an iPhone 14 Pro and a Fujifilm X-E4 mirrorless camera. The Fujifilm camera has a 26.1MP sensor with a maximum image size of 6240×4160 pixels. We used a 27mm f/2.8 lens, which corresponds to about 40mm in the 35mm-equivalent terms Apple uses for its lenses, somewhere between Apple’s main and telephoto lenses. We adjusted exposure and white balance in Adobe Lightroom.
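The 35mm-equivalent figure comes from the crop factor: the diagonal of a 36×24mm film frame divided by the diagonal of the camera’s sensor. A minimal sketch, assuming typical Fujifilm APS-C dimensions of 23.5×15.6mm for the X-E4 (the exact spec-sheet value may differ slightly):

```python
import math

FULL_FRAME_MM = (36.0, 24.0)  # 35mm film frame
APS_C_MM = (23.5, 15.6)       # assumed X-E4 sensor dimensions

def diagonal(dims):
    """Diagonal of a (width, height) rectangle in mm."""
    return math.hypot(*dims)

def crop_factor(sensor_mm):
    """How much narrower the field of view is than on 35mm film."""
    return diagonal(FULL_FRAME_MM) / diagonal(sensor_mm)

def equivalent_focal_length(focal_mm, sensor_mm):
    """Focal length giving the same field of view on 35mm film."""
    return focal_mm * crop_factor(sensor_mm)

print(f"crop factor: {crop_factor(APS_C_MM):.2f}")  # 1.53
print(f"27mm lens -> {equivalent_focal_length(27, APS_C_MM):.0f}mm equivalent")  # 41mm
```

The result, about 41mm, is consistent with the roughly 40mm quoted above.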

The X-E4 costs 1,099 euros with the 27mm lens (XF27mmF2.8 R WR), while the triple-camera iPhone 14 Pro and Pro Max are available for $1,299 and $1,499, respectively. The Pro models’ three lenses are:

- Main: equivalent to 24mm, f/1.78

- Ultra wide: equivalent to 13mm, f/2.2

- Telephoto: equivalent to 77mm, f/2.8

Apple uses 35mm-equivalent focal lengths for its lenses, a convention that compares the field of view captured on a sensor with that of traditional 35mm film photography. This makes different camera types comparable. Apple advertises 0.5x, 1x, 2x, and 3x zoom levels on the three-lens Pro models because iOS simulates a 2x, or 48mm-equivalent, lens with the main camera: it effectively crops a 12MP image from the center of the 48MP sensor. We also tested some of these 12MP 2x shots.
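A centered digital zoom is easy to express in code. This sketch assumes the 6048×8064 main-sensor dimensions quoted earlier and the 24mm-equivalent main lens; halving both the width and the height halves the field of view, which doubles the equivalent focal length. The function name is illustrative, not an iOS API.

```python
def center_crop(width, height, zoom):
    """Return (left, top, crop_width, crop_height) for a centered digital zoom."""
    crop_w, crop_h = round(width / zoom), round(height / zoom)
    left, top = (width - crop_w) // 2, (height - crop_h) // 2
    return left, top, crop_w, crop_h

MAIN_SENSOR = (6048, 8064)  # 48MP dimensions quoted in the article
MAIN_EQUIV_MM = 24          # main lens, 35mm-equivalent

left, top, w, h = center_crop(*MAIN_SENSOR, zoom=2)
print(f"2x crop: {w}x{h} ({w * h / 1e6:.1f}MP) starting at ({left}, {top})")
# 2x crop: 3024x4032 (12.2MP) starting at (1512, 2016)
print(f"equivalent focal length: {MAIN_EQUIV_MM * 2}mm")  # 48mm
```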

Photo comparison

Because of the element color pattern described above, a 12MP crop should look less sharp than an image captured with a native 12MP sensor, and also less sharp than a 12MP crop covering the same area at the same distance from a larger mirrorless or DSLR sensor. To test this, we shot the same scenes with the iPhone 14 Pro and the X-E4 at 1x and 2x.

The iPhone 14 Pro compares remarkably well with the Fujifilm X-E4, especially in low light: there is less noise and more detail is preserved. In almost all cases, the iPhone 14 Pro delivers a result comparable to or better than the X-E4’s, both in the 48MP raw shots and in the 12MP 2x shots.

The Fujifilm’s advantages lie in its many manual settings, for shutter speed, the physical aperture, and simulated film speed (ISO), and in its interchangeable lenses, particularly zoom and superzoom lenses for long-distance telephoto shots. You can fine-tune, time, and control every X-E4 shot and set up bracketing (a series of exposures at different settings, which iOS and iPadOS capture and merge automatically) for high-dynamic-range and other photos.
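Bracketing is usually expressed in EV (exposure value) steps, where each +1 EV doubles the light reaching the sensor. A minimal sketch that varies only the shutter time, assuming a fixed aperture and ISO and a hypothetical metered speed of 1/125 s:

```python
def bracket_shutter_speeds(base_seconds, ev_steps):
    """Exposure time for each EV offset: +1 EV doubles it, -1 EV halves it."""
    return [base_seconds * 2 ** ev for ev in ev_steps]

base = 1 / 125  # hypothetical metered shutter speed, in seconds
steps = [-2, -1, 0, 1, 2]
for ev, t in zip(steps, bracket_shutter_speeds(base, steps)):
    print(f"{ev:+d} EV: 1/{1 / t:.0f} s")
```

Varying shutter time rather than aperture keeps depth of field constant across the bracket, which makes the frames easier to merge into one HDR image.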

Conclusion

For relatively close-range shots, the iPhone 14 Pro with its three lenses offers a compelling alternative to a mirrorless camera that costs about the same. In many cases, you can leave the mirrorless camera at home and capture similar shots with the iPhone 14 Pro, with the benefits of a multi-purpose device: long battery life and mobile photo and video upload.

This article originally appeared in our sister publication, Macworld.
