Machine pattern recognition

March 26, 2024

By Martin Ciupek

Researchers at DFKI have developed a method intended to help robots evaluate objects in context. It will soon be presented in detail at a conference in the USA.

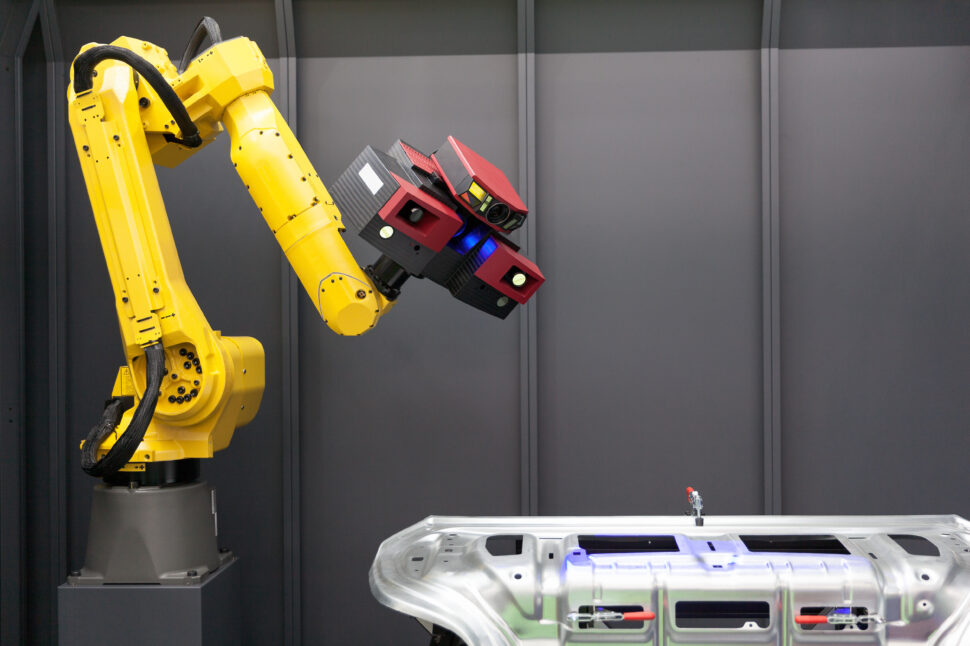

Photo: panthermedia.net/ Mihajlo Maricic

Robots often struggle in everyday human environments. The reason: people evaluate what they see in context. Someone who sees a full glass, for example, assumes that liquid could spill if the glass is moved abruptly. So that machines can also learn to orient themselves visually in our world, scientists at the German Research Center for Artificial Intelligence (DFKI) have developed the Multi-Key Anchor & Scene-Aware Transformer for 3D Visual Grounding (MiKASA). Using machine pattern recognition, it identifies complex spatial dependencies and features of objects in three-dimensional space and interprets them semantically. Researchers from the Augmented Vision department will present the solution at this year’s Conference on Computer Vision and Pattern Recognition (CVPR) in Seattle, USA.

Learning robots: Pattern recognition creates context for objects

People learn to put things into context as they learn language. This goes beyond language itself and helps, for example, to understand an intention or a reference and to connect it with an object in our surroundings. Robots do not yet have these skills, but they can learn them. Research approaches exist around the world, but so far they have only approximated human capabilities.

That is now changing, and DFKI wants to play an important role with MiKASA. According to the researchers, a “scene-aware object recognizer” lets machines draw conclusions from the surroundings of a reference object and thus recognize the object accurately and identify it correctly. They evaluate things depending on the context and gain a more nuanced understanding of their surroundings.

A question of perspective: Pitfalls in locating objects

However, the pitfalls lie in the details, for example in understanding relative spatial dependencies. “The chair in front of the blue monitor” can be “the chair behind the monitor” from another perspective. To make it clear to the machine that both descriptions refer to one and the same chair, the program works with a so-called “multi-key anchor concept”. It conveys the coordinates of anchor points in the field of view relative to the target object and weights the importance of nearby objects based on the text description.

Semantic references can then help to localize the object. Typically, a chair faces a table or stands with its back against a wall. The presence of a table or a wall thus indirectly defines the orientation of the chair.
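
The perspective problem described above can be illustrated with a toy example. The following Python sketch is not MiKASA's implementation. It only shows, under simplifying assumptions (a flat 2D scene, and the hypothetical helpers `viewer_relation`, `relative_offset` and `anchor_weights`), why "in front of" flips with the viewer's position while the offset between target and anchor stays the same, and how objects named in the description could be given more weight than other nearby objects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneObject:
    label: str
    x: float
    y: float


def viewer_relation(target, anchor, viewer):
    """Return 'in front of' or 'behind' as seen by a viewer looking at the anchor."""
    view_dx, view_dy = anchor.x - viewer.x, anchor.y - viewer.y
    off_dx, off_dy = target.x - anchor.x, target.y - anchor.y
    # Negative projection: the target lies on the viewer's side of the anchor.
    projection = off_dx * view_dx + off_dy * view_dy
    return "in front of" if projection < 0 else "behind"


def relative_offset(target, anchor):
    """Offset of the target from the anchor; does not depend on any viewer."""
    return (target.x - anchor.x, target.y - anchor.y)


def anchor_weights(description, anchors, background=0.1):
    """Toy text-based importance: anchors named in the description weigh more.

    Assumption for illustration only; a real system would use a language model.
    """
    words = description.lower().split()
    raw = {a.label: (1.0 if a.label in words else background) for a in anchors}
    total = sum(raw.values())
    return {label: value / total for label, value in raw.items()}


chair = SceneObject("chair", 0.0, 1.0)
monitor = SceneObject("monitor", 0.0, 2.0)
wall = SceneObject("wall", 3.0, 1.0)
viewer_a = SceneObject("viewer", 0.0, 0.0)  # stands on the chair's side
viewer_b = SceneObject("viewer", 0.0, 4.0)  # stands on the opposite side

print(viewer_relation(chair, monitor, viewer_a))  # in front of
print(viewer_relation(chair, monitor, viewer_b))  # behind
print(relative_offset(chair, monitor))            # (0.0, -1.0)
print(anchor_weights("the chair in front of the blue monitor", [monitor, wall]))
```

Both viewers describe the same chair differently, but the anchor-relative offset is identical, which is the kind of viewer-independent information an anchor-based description can rely on.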

Hit rate for object detection increased by 10%

According to DFKI, MiKASA achieves an accuracy of up to 78.6% by linking language models, learned semantics and the recognition of objects in real three-dimensional space. Compared to the best previous technology in this area, the hit rate for object detection was increased by around 10%.

Numerous sensors provide the robot with visual information about its environment, and their data is combined into an overall impression. As with human eyes, the fields of view overlap. To generate a coherent picture from the large amount of data, the DFKI researchers have developed “SG-PGM” (Partial Graph Matching Network with Semantic Geometric Fusion for 3D Scene Graph Alignment and Its Downstream Tasks). The alignment between so-called 3D scene graphs, three-dimensional representations of a scene, is the basis for a variety of applications. For example, it supports “point cloud registration,” in which points from different laser scanners are merged. This helps robots navigate.
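
To give an idea of what point cloud registration involves, here is a minimal Python sketch. It is not SG-PGM. It assumes a simplified 2D case in which it is already known which point in one scan corresponds to which point in the other, and it uses the standard closed-form least-squares solution for a rotation plus a shift. The function names `estimate_rigid_transform` and `apply_transform` are chosen for this illustration. Real laser scans are three-dimensional and the correspondences first have to be found.

```python
import math


def estimate_rigid_transform(source, target):
    """Least-squares rotation angle and shift mapping source onto target.

    Assumes source[i] and target[i] are the same physical point (2D).
    """
    n = len(source)
    src_cx = sum(p[0] for p in source) / n
    src_cy = sum(p[1] for p in source) / n
    tgt_cx = sum(p[0] for p in target) / n
    tgt_cy = sum(p[1] for p in target) / n

    sin_sum = cos_sum = 0.0
    for (ax, ay), (bx, by) in zip(source, target):
        # Work with coordinates relative to each scan's centroid.
        ax, ay = ax - src_cx, ay - src_cy
        bx, by = bx - tgt_cx, by - tgt_cy
        sin_sum += ax * by - ay * bx
        cos_sum += ax * bx + ay * by

    theta = math.atan2(sin_sum, cos_sum)
    c, s = math.cos(theta), math.sin(theta)
    # The shift moves the rotated source centroid onto the target centroid.
    shift = (tgt_cx - (c * src_cx - s * src_cy),
             tgt_cy - (s * src_cx + c * src_cy))
    return theta, shift


def apply_transform(points, theta, shift):
    """Rotate points by theta around the origin, then translate by shift."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + shift[0], s * x + c * y + shift[1])
            for x, y in points]


# Scan B sees the same four points as scan A, rotated by 30 degrees and shifted.
scan_a = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (2.0, 1.0)]
scan_b = apply_transform(scan_a, math.radians(30), (2.0, -1.0))

theta, shift = estimate_rigid_transform(scan_a, scan_b)
aligned = apply_transform(scan_a, theta, shift)
print(math.degrees(theta), shift)
```

Once the transform is known, both scans can be expressed in one coordinate system and merged.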

To ensure that this works even in dynamic environments with possible sources of interference, SG-PGM links the scene graphs with a neural network. The program reuses the geometric features learned through registration and associates the grouped geometric point data with the semantic features at the node level.
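
The idea of combining semantic and geometric information per node, and of matching only some of the nodes, can be sketched in a few lines of Python. This is a hand-built stand-in, not the SG-PGM network: the hypothetical `fuse` function simply appends a bounding-box size to a made-up semantic vector, and `partial_match` pairs nodes greedily by cosine similarity instead of using a learned matching. Nodes without a sufficiently similar counterpart stay unmatched, as can happen when an object has been moved or removed.

```python
import math


def fuse(semantic, points):
    """Concatenate a node's semantic vector with a simple geometric descriptor.

    The descriptor is the bounding-box extent of the node's 3D points,
    which does not change when the whole scan is shifted.
    """
    xs, ys, zs = zip(*points)
    extent = [max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs)]
    return list(semantic) + extent


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def partial_match(nodes_a, nodes_b, threshold=0.9):
    """Greedy one-to-one matching; pairs below the threshold stay unmatched."""
    scored = sorted(
        ((cosine(fa, fb), na, nb)
         for na, fa in nodes_a.items()
         for nb, fb in nodes_b.items()),
        reverse=True,
    )
    matched, used_b = {}, set()
    for score, na, nb in scored:
        if score < threshold:
            break
        if na in matched or nb in used_b:
            continue
        matched[na] = nb
        used_b.add(nb)
    return matched


# Illustrative scene graphs: the second scan is shifted and lacks the lamp.
graph_a = {
    "chair": fuse([1, 0, 0], [(0.0, 0.0, 0.0), (0.5, 0.5, 1.0)]),
    "table": fuse([0, 1, 0], [(1.0, 1.0, 0.0), (2.5, 1.8, 0.7)]),
    "lamp": fuse([0, 0, 1], [(4.0, 0.0, 0.0), (4.2, 0.2, 1.5)]),
}
graph_b = {
    "b_chair": fuse([1, 0, 0], [(2.0, 1.0, 0.0), (2.5, 1.5, 1.0)]),
    "b_table": fuse([0, 1, 0], [(3.0, 2.0, 0.0), (4.5, 2.8, 0.7)]),
}

matches = partial_match(graph_a, graph_b)
print(matches)
```

In this example the chair and the table find their counterparts, while the lamp remains unmatched.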

Computer vision: technology for robots will be presented at CVPR 2024

CVPR 2024 will take place from June 17 to 21 at the Seattle Convention Center and is one of the most important events in the field of machine pattern recognition. With a total of six papers, the team led by Didier Stricker, head of the Augmented Vision research department at DFKI, wants to present, among other things, technologies that identify objects in three-dimensional space from variable linguistic descriptions and that capture and map the environment holistically with sensors.